I had the pleasure of open sourcing https://github.com/DataDog/chaos-controller, Datadog's in-house chaos tooling for Kubernetes-native disruptions.

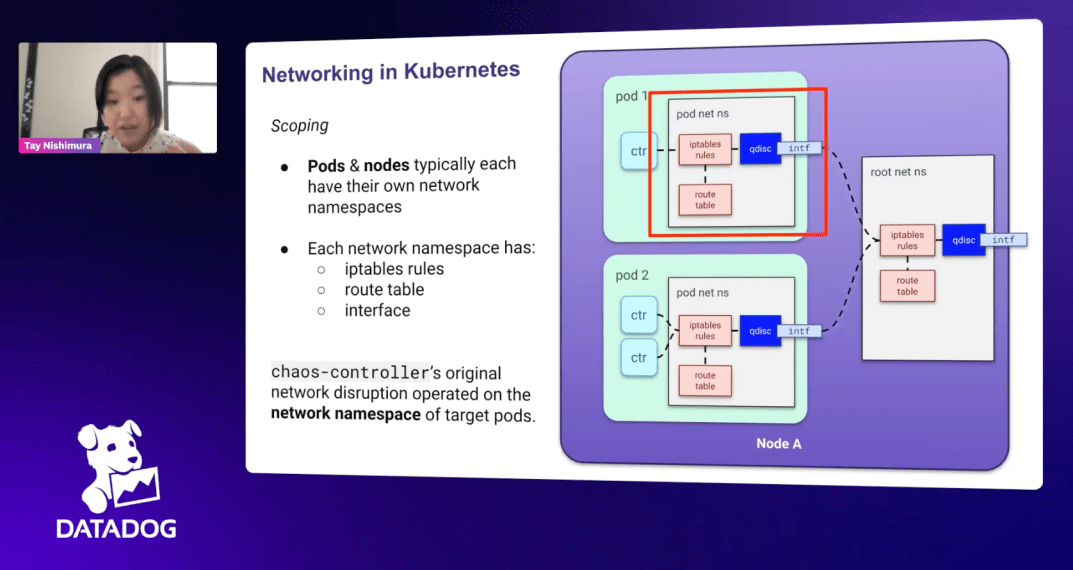

In this talk, my team lead Joris Bonnefoy and I share how network disruptions and CPU disruptions work under the hood, all the way down to the network interfaces of the Kubernetes nodes and pods.

One of the most challenging aspects of onboarding to this codebase is understanding how the chaos controller manipulates the Linux `prio` queuing discipline (qdisc) to create a traffic band that isolates the targeted traffic from the rest of the network traffic, so that only that band is disrupted with netem rules. My document (featured left) covers how it works.

After learning how to operate and modify the chaos controller, I decided to try creating a disruption of my own. At Datadog, there had been strong demand for an application-level disruption, because our existing offerings focused on factors external to the application itself (such as noisy neighbors, network degradation, DNS degradation, and node failures).

Since most Datadog microservices rely on gRPC to communicate, we designed a disruption built around a gRPC interceptor on the target service: the interceptor listens for chaos requests and, depending on the configuration, returns application errors for some percentage of the service's requests.